Does anyone know of an application that can convert audio to text?

-

I assume it is spoken text. Which language is that text in? – Martin Ueding Jul 09 '12 at 11:33

-

The speech text is in simple English. – Kopano Jul 09 '12 at 14:33

5 Answers

The software you can use is Vosk-api, a modern speech recognition toolkit based on neural networks. It supports 7+ languages and works on a variety of platforms, including the Raspberry Pi and mobile devices.

First you convert the file to the required format and then you recognize it:

ffmpeg -i file.mp3 -ar 16000 -ac 1 file.wav
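As a sanity check before recognition, the sketch below uses Python's standard `wave` module to confirm that a converted file is mono, 16-bit and sampled at 16 kHz. The helper name `check_wav` is mine, and the expected format is an assumption based on the ffmpeg flags above plus the usual 16-bit PCM default; adjust it if your model expects something else.

```python
import wave


def check_wav(path, rate=16000):
    """Return a list of problems found in the WAV file (empty if none).

    Hypothetical helper: the expected format (mono, 16-bit, 16 kHz)
    is an assumption based on the ffmpeg flags above.
    """
    problems = []
    with wave.open(path, "rb") as wf:
        if wf.getnchannels() != 1:
            problems.append(f"expected 1 channel, got {wf.getnchannels()}")
        if wf.getsampwidth() != 2:
            problems.append(f"expected 16-bit samples, got {8 * wf.getsampwidth()}-bit")
        if wf.getframerate() != rate:
            problems.append(f"expected {rate} Hz, got {wf.getframerate()} Hz")
    return problems
```

Calling `check_wav("file.wav")` should return an empty list for a file produced by the ffmpeg command above.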

Then install vosk-api with pip:

pip3 install vosk

Then use these steps:

git clone https://github.com/alphacep/vosk-api

cd vosk-api/python/example

wget https://alphacephei.com/kaldi/models/vosk-model-small-en-us-0.3.zip

unzip vosk-model-small-en-us-0.3.zip

mv vosk-model-small-en-us-0.3 model

python3 ./test_simple.py test.wav > result.json

The result will be stored in JSON format.
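To pull just the recognized text out of `result.json`, something like the sketch below may help. It assumes the file holds one or more JSON objects written back to back, some with a `text` key for finished utterances and others with only a `partial` key. I have not checked that against every version of `test_simple.py`, so treat the key names and the function `extract_text` as assumptions.

```python
import json


def extract_text(raw):
    """Collect the "text" field from every JSON object found in raw.

    Assumes objects are concatenated, separated only by whitespace.
    Objects without a non-empty "text" key (e.g. partial results) are skipped.
    """
    decoder = json.JSONDecoder()
    pieces = []
    pos = 0
    while pos < len(raw):
        # raw_decode does not skip leading whitespace, so do it here.
        if raw[pos].isspace():
            pos += 1
            continue
        obj, pos = decoder.raw_decode(raw, pos)
        if isinstance(obj, dict) and obj.get("text"):
            pieces.append(obj["text"])
    return " ".join(pieces)
```

Usage would be along the lines of `print(extract_text(open("result.json").read()))`.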

The same directory also contains an srt subtitle output example, which is easier to evaluate and can be directly useful to some users:

python3 -m pip install srt

python3 ./test_srt.py test.wav

The example audio in the repository contains three sentences, spoken in a perfect American English accent with perfect sound quality, which I transcribe as:

one zero zero zero one

nine oh two one oh

zero one eight zero three

The "nine oh two one oh" is said very fast, but is still clear. The "z" of the second-to-last "zero" sounds a bit like an "s".

The SRT generated above reads:

1

00:00:00,870 --> 00:00:02,610

what zero zero zero one

2

00:00:03,930 --> 00:00:04,950

no no to uno

3

00:00:06,240 --> 00:00:08,010

cyril one eight zero three

So we can see that several mistakes were made, presumably in part because we, unlike the model, know in advance that all the words are numbers.

Next I also tried vosk-model-en-us-aspire-0.2, which is listed at https://alphacephei.com/vosk/models and is a 1.4GB download, compared to 36MB for vosk-model-small-en-us-0.3:

mv model model.vosk-model-small-en-us-0.3

wget https://alphacephei.com/vosk/models/vosk-model-en-us-aspire-0.2.zip

unzip vosk-model-en-us-aspire-0.2.zip

mv vosk-model-en-us-aspire-0.2 model

and the result was:

1

00:00:00,840 --> 00:00:02,610

one zero zero zero one

2

00:00:04,026 --> 00:00:04,980

i know what you window

3

00:00:06,270 --> 00:00:07,980

serial one eight zero three

which got one more word correct.
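To put a rough number on "one more word correct", here is a sketch that computes the word error rate (word-level edit distance divided by the number of reference words) for the two transcripts above. The reference is my own transcription from earlier in this answer, and the function names are just for illustration.

```python
def edit_distance(ref, hyp):
    """Word-level Levenshtein distance between two lists of words."""
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i]
        for j, h in enumerate(hyp, start=1):
            cost = 0 if r == h else 1
            curr.append(min(prev[j] + 1,          # word missing from hypothesis
                            curr[j - 1] + 1,      # extra word in hypothesis
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1]


def word_error_rate(refs, hyps):
    """Total edit distance over all sentence pairs / total reference words."""
    errors = sum(edit_distance(r.split(), h.split()) for r, h in zip(refs, hyps))
    words = sum(len(r.split()) for r in refs)
    return errors / words


reference = ["one zero zero zero one", "nine oh two one oh", "zero one eight zero three"]
small = ["what zero zero zero one", "no no to uno", "cyril one eight zero three"]
aspire = ["one zero zero zero one", "i know what you window", "serial one eight zero three"]

print(f"small:  {word_error_rate(reference, small):.2f}")
print(f"aspire: {word_error_rate(reference, aspire):.2f}")
```

With these inputs the small model makes 7 errors in 15 reference words and the larger model 6 in 15, so the difference on this tiny sample is a single word.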

Tested on vosk-api 7af3e9a334fbb9557f2a41b97ba77b9745e120b3.


-

Also, as an addition to this answer, there's a cool demo of both speech recognition and voice command tools here: https://www.youtube.com/watch?v=gr1FZ2F7KYA&list=PLvB_ffZs45ufhOnw1epfjncXYRa5pfGw8&index=1 – Daithí Jan 08 '15 at 10:22

-

You just download it and unpack, there is no such thing as "add to the system" – Nikolay Shmyrev Feb 08 '15 at 13:56

-

@NikolayShmyrev Where should I unpack it so that pocketsphinx_continuous finds it? – jarno Feb 08 '15 at 14:14

-

You can unpack it anywhere. pocketsphinx does not look in any location automatically; you have to specify the model path in the -hmm argument, as pointed out in the answer above. – Nikolay Shmyrev Feb 08 '15 at 14:33

-

Well, I installed the packages pocketsphinx-utils, pocketsphinx-hmm-en-hub4wsj and pocketsphinx-lm-en-hub4 from the universe repository of Ubuntu 14.04. Then

pocketsphinx_continuous -infile file.wav -hmm en_US/hub4wsj_sc_8k -lm en_US/hub4.5000.DMP 2> pocketsphinx.log

worked. Maybe they are not optimal packages, but they were the best matches I could find in the repositories. – jarno Feb 08 '15 at 15:05

-

They are VERY suboptimal packages; it is not recommended to use them unless you want to complain about bad accuracy. It is better to install pocketsphinx from GitHub, as the Ubuntu version is very much outdated. – Nikolay Shmyrev Feb 08 '15 at 17:59

-

brew has this package but the quality is terrible. Also wondering if there's a way to insert line breaks at pauses so I can basically get paragraphs when speaker pauses. – chovy Mar 09 '17 at 07:57

I know this is old, but to expand on Nikolay's answer and hopefully save someone some time in the future: to get an up-to-date version of pocketsphinx working, you need to compile it from the GitHub or SourceForge repository (I'm not sure which is kept more up to date). Note that -j8 means run up to 8 jobs in parallel; if you have more CPU cores you can increase the number.

git clone https://github.com/cmusphinx/sphinxbase.git

cd sphinxbase

./autogen.sh

./configure

make -j8

make -j8 check

sudo make install

cd ..

git clone https://github.com/cmusphinx/pocketsphinx.git

cd pocketsphinx

./autogen.sh

./configure

make -j8

make -j8 check

sudo make install

cd ..

Then, from: https://sourceforge.net/projects/cmusphinx/files/Acoustic%20and%20Language%20Models/US%20English/

download the newest versions of cmusphinx-en-us-....tar.gz and en-70k-....lm.gz

tar -xzf cmusphinx-en-us-....tar.gz

gunzip en-70k-....lm.gz

Then you can finally proceed with the steps from Nikolay's answer:

ffmpeg -i book.mp3 -ar 16000 -ac 1 book.wav

pocketsphinx_continuous -infile book.wav \
    -hmm cmusphinx-en-us-8khz-5.2 -lm en-70k-0.2.lm \
    2>pocketsphinx.log >book.txt

Sphinx works alright. I wouldn't rely on it to make a readable version of the text, but it's good enough that you can search it if you're looking for a particular quote. That works especially well with a search tool like Recoll (http://www.lesbonscomptes.com/recoll/), which is built on Xapian, accepts wildcards and doesn't require exact search expressions.
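As a rough illustration of why inexact search suits error-prone transcripts, here is a sketch using Python's standard `difflib` rather than Recoll or Xapian. It slides a window of words over the transcript and reports the window most similar to the quote. The function name and the sample strings are made up for the example.

```python
from difflib import SequenceMatcher


def best_match(transcript, quote):
    """Return (score, start_word_index, window_text) for the closest window."""
    words = transcript.lower().split()
    target = quote.lower().split()
    n = len(target)
    best = (0.0, -1, "")
    for start in range(max(1, len(words) - n + 1)):
        window = words[start:start + n]
        # Compare as strings so near-miss words ("three" vs "tree") still score.
        score = SequenceMatcher(None, " ".join(window), " ".join(target)).ratio()
        if score > best[0]:
            best = (score, start, " ".join(window))
    return best


transcript = "i think the apple does not fall for from the three as they say"
score, index, text = best_match(transcript, "the apple does not fall far from the tree")
print(f"{score:.2f} at word {index}: {text}")
```

This is quadratic-ish and only meant for small texts; for a whole audiobook an indexed tool is the better fit.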

Hope this helps.


-

Everything works like a charm, but in my case I had to run the following commands to fix this error:

pocketsphinx_continuous: error while loading shared libraries: libpocketsphinx.so.3: cannot open shared object file: No such file or directory

export LD_LIBRARY_PATH=/usr/local/lib

export PKG_CONFIG_PATH=/usr/local/lib/pkgconfig

– Vijay Dohare Sep 19 '17 at 11:30

This is also recommended at https://cmusphinx.github.io/wiki/tutorialpocketsphinx/#installation-on-unix-system – andrybak Sep 19 '19 at 21:58

If you are looking to convert speech to text, you could try installing the julius package:

sudo apt install julius

Description:

"Julius" is a high-performance, two-pass large vocabulary continuous speech recognition (LVCSR) decoder software for speech-related researchers and developers.

Or another option that isn't in Ubuntu's repositories or in the Snap Store is Simon:

... is an open-source speech recognition program and replaces the mouse and keyboard.

Reference Links:

Julius:

Simon:

-

Could you add to this answer an example of how to run Julius? It is phenomenally unclear from the documentation. – Mar 07 '20 at 00:17

-

In the future, it would be better to post one answer for the software Julius and one answer for the software Simon, so that each answer can be voted on and commented on separately. – Flimm Jul 31 '23 at 07:22

You can use Mozilla DeepSpeech, an open-source speech-to-text tool, but you will need to train the application or download Mozilla's pre-trained model. For my project the accuracy was still not sufficient, as the audio files were not of good quality, so I used Transcribear instead. It is a web-based editor with speech-to-text capabilities, but you need to be online to upload recordings to the Transcribear server.


-

I am considering transcribing about 70 hours by one speaker (accented, clear non-native en). Can DeepSpeech be trained using the existing mp3 files? – LenB Apr 26 '20 at 19:18

-

In the future, it would be better to post one answer for Mozilla DeepSpeech, and one answer for Transcribear, so that each answer can be voted on and commented on separately. – Flimm Jul 31 '23 at 07:22

-

Mozilla DeepSpeech hasn't seen any development ever since Mozilla fired the DeepSpeech team. See this issue for more details: github.com/mozilla/DeepSpeech/issues/3693 – Flimm Aug 23 '23 at 13:24

vosk-transcriber official CLI from Vosk

I was randomly tab-completing after installing Vosk today (previously mentioned at: https://askubuntu.com/a/423849/52975) when I saw that they had at last added a nice CLI wrapper. Tested on Ubuntu 23.10, you can install it along with the English model as:

pipx install vosk

mkdir -p ~/var/lib/vosk

cd ~/var/lib/vosk

wget https://alphacephei.com/vosk/models/vosk-model-en-us-0.22.zip

unzip vosk-model-en-us-0.22.zip

cd -

and then use as:

wget -O think.ogg https://upload.wikimedia.org/wikipedia/commons/4/49/Think_Thomas_J_Watson_Sr.ogg

vosk-transcriber -m ~/var/lib/vosk/vosk-model-en-us-0.22 -i think.ogg -o think.srt -t srt

-i will eat pretty much anything including compressed audio files like .ogg or even video files like .ogv, presumably FFmpeg at work.
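Since the SRT output carries timestamps, pauses can be turned into paragraph breaks afterwards. The sketch below is a minimal hand-rolled SRT reader (not part of Vosk) that joins cue text and starts a new paragraph whenever the gap between one cue's end and the next cue's start exceeds a threshold. The function names and the 2-second default are arbitrary choices for illustration, and it only handles plain, well-formed SRT.

```python
import re

TIME = re.compile(r"(\d+):(\d\d):(\d\d)[,.](\d{3})")


def to_seconds(stamp):
    """Convert an SRT timestamp such as 00:00:03,930 to seconds."""
    h, m, s, ms = map(int, TIME.fullmatch(stamp.strip()).groups())
    return h * 3600 + m * 60 + s + ms / 1000


def srt_to_paragraphs(srt_text, max_gap=2.0):
    """Join cue text, breaking paragraphs where the silence exceeds max_gap seconds."""
    paragraphs, current, last_end = [], [], None
    # Cues are separated by blank lines: index line, timing line, then text lines.
    for block in re.split(r"\n\s*\n", srt_text.strip()):
        lines = [ln.strip() for ln in block.splitlines() if ln.strip()]
        if len(lines) < 3 or "-->" not in lines[1]:
            continue  # skip anything that does not look like a cue
        start, end = (to_seconds(t) for t in lines[1].split("-->"))
        if last_end is not None and start - last_end > max_gap and current:
            paragraphs.append(" ".join(current))
            current = []
        current.extend(lines[2:])
        last_end = end
    if current:
        paragraphs.append(" ".join(current))
    return "\n\n".join(paragraphs)
```

For the file written above, usage would be something like `print(srt_to_paragraphs(open("think.srt").read()))`.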

Nice! Now they only need a vosk-transcriber --download-model en option and a default -m directory to finally make things fully clean, but this is already a huge quality-of-life improvement.

I played with a few examples to informally evaluate accuracy at: https://unix.stackexchange.com/questions/256138/is-there-any-decent-speech-recognition-software-for-linux/613392#613392

OpenAI Whisper

https://github.com/openai/whisper

Tested on Ubuntu 24.04, install:

sudo apt install ffmpeg

pipx install openai-whisper==20231117

Sample usage:

wget https://upload.wikimedia.org/wikipedia/commons/f/f6/Appuru.wav

time whisper Appuru.wav

Terminal output with this perfectly clean en-US demo: https://commons.wikimedia.org/wiki/File:Appuru.wav

/home/ciro/.local/pipx/venvs/openai-whisper/lib/python3.12/site-packages/whisper/transcribe.py:115: UserWarning: FP16 is not supported on CPU; using FP32 instead

warnings.warn("FP16 is not supported on CPU; using FP32 instead")

Detecting language using up to the first 30 seconds. Use `--language` to specify the language

Detected language: English

[00:00.000 --> 00:03.000] The apple does not fall far from the tree.

real 0m7.516s

user 0m31.209s

sys 0m4.194s

and cwd now contains several output files such as Appuru.srt:

1

00:00:00,000 --> 00:00:03,000

The apple does not fall far from the tree.

so it worked perfectly.

Here I did a longer benchmark with a video: Automatically generate subtitles/close caption from a video using speech-to-text? and it worked amazingly!

https://unix.stackexchange.com/questions/256138/is-there-any-decent-speech-recognition-software-for-linux/718354#718354 by Franck Dernoncourt reports that to make use of an Nvidia 3090 GPU, you add the following after conda activate whisperpy39:

pip install -f https://download.pytorch.org/whl/torch_stable.html

conda install pytorch==1.10.1 torchvision torchaudio cudatoolkit=11.0 -c pytorch

OpenAI Whisper-based tools

A list: https://github.com/sindresorhus/awesome-whisper#cli-tools learned from: Automatically generate subtitles/close caption from a video using speech-to-text? Perhaps some of those will make the model a bit easier to use.

Speech Note

https://github.com/mkiol/dsnote

This project is a front-end for a bunch of possible backend TTS and STT models in multiple languages. Install and launch:

flatpak install flathub net.mkiol.SpeechNote

flatpak run net.mkiol.SpeechNote

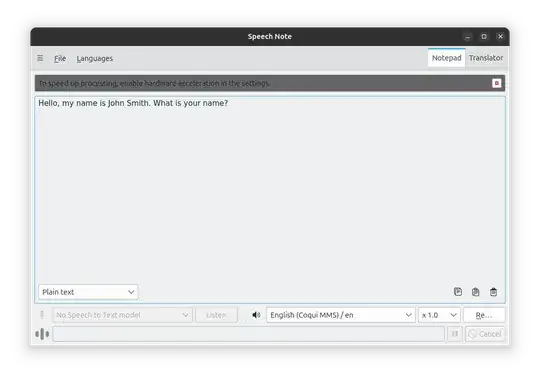

opens a GUI:

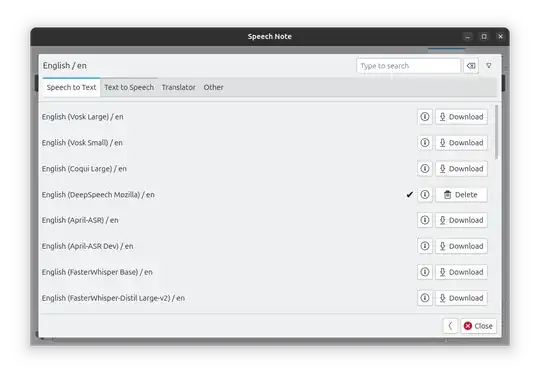

Then under:

- Languages

- English

- Speech to Text

I can download a model:

They have both Whisper and Vosk and a few others.

Then you can either:

- Click "Listen" to take voice input from the microphone

- File > Import from a file to select a sound file containing the speech

and the recognized text will appear in the text box.

CLI-only usage is limited unfortunately: https://github.com/mkiol/dsnote/issues/83

Tested on Speech Note 4.7.0, Ubuntu 24.10.

Benchmarks

https://github.com/Picovoice/speech-to-text-benchmark mentions a few:

- LibriSpeech. This one is also part of MLPerf v3.1.

- TED-LIUM

- Common Voice
